Yahboom AI Embodied Intelligence Robot Dog for Raspberry Pi, 15 Joint Programming Bionic Robot Dog (with RPi CM4)

Color:

With RPi CM4

With RPi CM5

In stock

1.28 kg

Yes

New

Amazon

USA

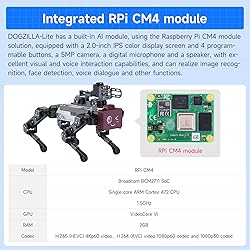

- Redefining smart companions: when robot dogs "learn to think". DOGZILLA-Lite is the world's first educational robot dog that integrates multimodal large models with embodied intelligence. It has a built-in Raspberry Pi module and supports multiple AI vision functions such as face detection and object recognition. The joints use 2.3 kg·cm serial bus servos, which provide omnidirectional movement, six-dimensional posture control, posture stability, and multiple motion gaits. It is not only a walking robot but also an AI partner that can understand images, voices, and environments and make autonomous decisions, from executing simple instructions such as "find the red block" to completing complex tasks such as "recognize the owner and dance". Combining the robot arm with body movements lets it carry out complex embodied intelligence applications, making it an ideal platform for exploring AI and robotics. Safety instructions: the robot arm is designed to be lightweight and is limited to grabbing the standard EVA blocks and balls. Do not use it for heavier objects.

Product not available

This product is not permitted by the country's customs under category 4x4.


【The first AI LLM + embodied intelligence robot dog】DOGZILLA-Lite is the world's first educational robot dog that integrates multimodal large models with embodied intelligence. The built-in Raspberry Pi module supports multiple AI vision functions such as face detection and object recognition. It is not only a walking robot but also an AI partner that can understand images, voices, and environments and make autonomous decisions.

【Robot arm expansion & AI vision technology】Supports a 3DOF robotic arm extension, enabling autonomous object grasping and handling. A pre-programmed GUI system with built-in AI vision and voice programs provides functions such as 3D object recognition, color recognition, face recognition, emotion recognition, and motion detection for creative projects. Note: the robotic arm is limited to grasping the standard EVA cubes and balls.

【Multiple control methods and real-time images】You can control DOGZILLA-Lite through the XGO app for Android and iOS devices and through PC software. DOGZILLA-Lite can also transmit real-time images to the application for a first-person control experience. Yahboomrobot can control the robot dog's movement and supports video images, but it cannot control the robotic arm.

【Gait planning, free adjustment】DOGZILLA-Lite integrates inverse kinematics algorithms to accurately control the ground contact time, lift time, and lift height of each leg. You can adjust these parameters to achieve different gaits. Yahboom provides a detailed inverse kinematics analysis and the source code of the inverse kinematics functions.

【Why choose DOGZILLA-Lite?】It is not just a toy but a ticket to the future. Students use it to understand AI principles, geeks use it to develop autonomous driving algorithms, and families use it as an interactive technology partner. Yahboom provides open-source materials and code for AI visual interaction, OpenCV, and AI LLM, along with technical support.
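The gait parameters mentioned above (ground contact time, lift time, lift height) and the inverse kinematics step can be illustrated with a small sketch. This is not Yahboom's source code: it assumes a simplified planar two-link leg, and the function names, link lengths, and timing values are all hypothetical, chosen only to show how a foot trajectory might be turned into joint angles.

```python
import math

def leg_ik(x, z, l1, l2):
    """Planar two-link inverse kinematics (hypothetical helper).

    (x, z) is the foot target in the hip frame, x forward and z up,
    l1 and l2 are the upper and lower link lengths.
    Returns (hip, knee) joint angles in radians.
    """
    d2 = x * x + z * z
    d = math.sqrt(d2)
    if d > l1 + l2 or d < abs(l1 - l2):
        raise ValueError("foot target out of reach")
    # Law of cosines gives the knee angle; clamp against rounding error.
    cos_knee = (d2 - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    knee = math.acos(max(-1.0, min(1.0, cos_knee)))
    hip = math.atan2(z, x) - math.atan2(l2 * math.sin(knee),
                                        l1 + l2 * math.cos(knee))
    return hip, knee

def leg_fk(hip, knee, l1, l2):
    """Forward kinematics, used here only to check the IK result."""
    x = l1 * math.cos(hip) + l2 * math.cos(hip + knee)
    z = l1 * math.sin(hip) + l2 * math.sin(hip + knee)
    return x, z

def foot_target(t, stance_time, swing_time, step_length, lift_height, stand_height):
    """Foot position at time t for one leg of a simple periodic gait.

    During stance the foot slides backward on the ground; during swing it
    moves forward along a half-sine arc that peaks at lift_height.
    """
    phase = t % (stance_time + swing_time)
    if phase < stance_time:
        s = phase / stance_time
        return step_length * (0.5 - s), -stand_height
    s = (phase - stance_time) / swing_time
    return step_length * (s - 0.5), -stand_height + lift_height * math.sin(math.pi * s)

# Assumed values for illustration only (meters and seconds), not DOGZILLA-Lite specs.
L1, L2 = 0.06, 0.07
for t in (0.0, 0.15, 0.4):
    fx, fz = foot_target(t, 0.3, 0.2, 0.04, 0.02, 0.10)
    hip, knee = leg_ik(fx, fz, L1, L2)
    print(f"t={t:.2f}s foot=({fx:.3f}, {fz:.3f}) hip={hip:.3f} knee={knee:.3f}")
```

Lengthening `stance_time` relative to `swing_time` keeps each foot on the ground longer, while raising `lift_height` makes the swing arc taller. In this sketch, those are the knobs that correspond to the adjustable parameters the listing describes.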


Protected purchase

Enjoy a safe and reliable shopping experience


Ecuador Customs Information

On Tiendamia you can shop under category B (4x4) and category C. You will not have to handle any customs paperwork. We do it all for you.

- 4x4 (or category B)

  - a. It does not pay Ecuadorian taxes.

  - b. You may place an unlimited number of orders under the 4x4 regime, provided each one is within the maximum permitted weight (up to 4 kg), does not exceed $400, and stays within the annual per-person quota.

  - c. The annual quota for purchases abroad under the 4x4 regime is at most $1,600. The quota is tied to your national ID number (cédula), not to your Tiendamia user account.

  - d. A wide variety of products can be purchased in this category as long as they comply with the 4x4 rules and are not for commercial purposes. For that reason, you can buy at most 3 identical or similar products of the same category. For example, you can buy up to 3 perfumes, up to 3 watches, and up to 3 pairs of shoes; if you exceed this quantity, customs may apply extra charges to your order.

- Tablets, laptops, and cell phones can be purchased through Category C.

  - a. This category does pay taxes (IVA + Fodinfa).

  - b. Only one new cell phone can be purchased per year; refurbished, used, or "open box" units are not accepted.

Delivery guarantee

With Tiendamia, all your purchases are covered by a Delivery Guarantee or a full refund of your money.

Purchases are 100% secure and guaranteed, so you can order what you dream of and receive it from anywhere in the world at your door.

How do I request a return?

To request a return, the customer must do so through their Tiendamia account. This process is subject to approval by the Returns department, which can take 48 to 72 business hours. If the option is not available on the website, the customer must contact Customer Service to start the request.

The following products cannot be returned:

- Products with a delivery time of more than 20 business days.

- Products that by their nature cannot be returned in the U.S. or China, so Tiendamia cannot offer the customer a return. Examples: perfumes, creams, and medicines.

Debit and Credit Cards

Visa

Mastercard

American Express

Diners

Discover

Alias

Payments via PayPal

Purchases are processed in U.S. dollars using your account balance or international cards.

PayPal
